If you have spent any time inside a B2B marketing automation platform that has been running for a year or more, you have probably encountered it: a campaign program that technically works but is nearly impossible to understand. The folder structure made sense to someone at some point. The naming is inconsistent or absent. The logic runs, but the reasoning behind it lives entirely in someone’s head.

This is one of the most common problems we see when working with B2B marketing teams: MAP instances that have become difficult to navigate, support, or hand off, not because anyone did anything wrong, but because programs accumulated without a shared standard to hold them together.

The causes vary. Some programs were built under deadline pressure with the intent to clean them up later, but then later never came. Others were built carefully by someone who kept all the context in their head and never had reason to write it down until they changed roles or left the company. Some teams grew faster than their processes did, adding people and campaigns without establishing a common way of working. Often it is a combination of all three.

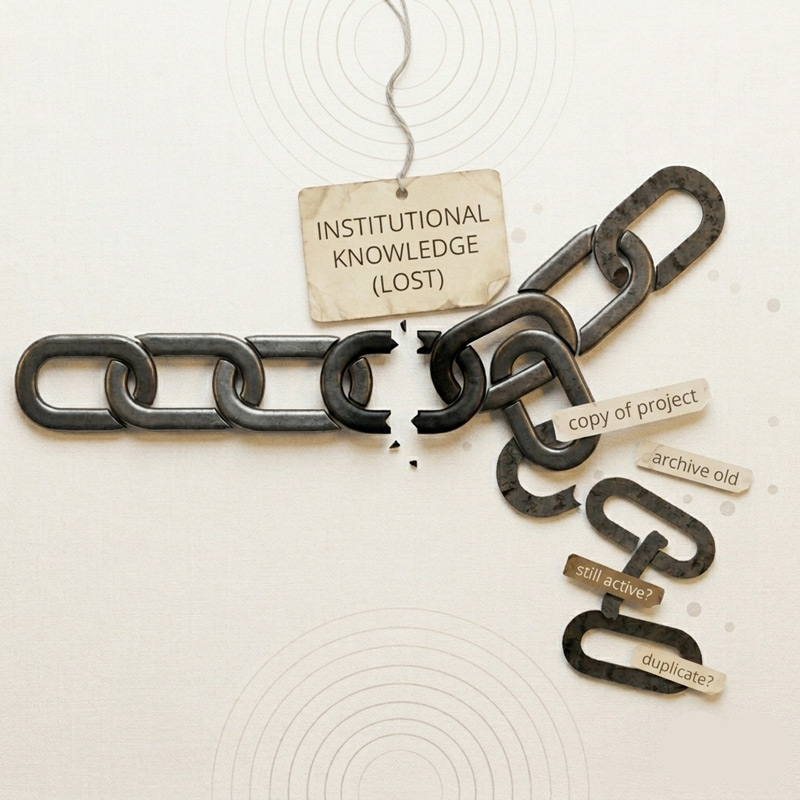

The result is the same regardless of cause: programs that are hard to troubleshoot, reporting that requires manual cleanup, new team members who spend weeks reverse-engineering what exists before they can contribute, and a quiet but real business continuity risk. If the people who understand how things work leave, that knowledge walks out with them.

None of this is inevitable. This post covers the four areas where operational standards make the biggest difference: program structure, naming conventions, pre-launch QA, and documentation.

1. Program Structure and Architecture

How programs are organized inside the MAP before a single email goes out sets the tone for everything that follows. A well-structured instance is navigable by anyone on the team. A poorly structured one requires institutional knowledge just to find things.

Folder structure

Most MAP folder structures start with good intentions and gradually become a reflection of organizational history. Campaigns from three years ago sit next to active programs. Test programs are indistinguishable from live ones. Nobody is sure what is safe to touch.

Good folder architecture follows one consistent logic, typically program type, then year or quarter, then campaign name. The specific structure matters less than the fact that it is agreed upon, documented, and consistently followed.
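
As an illustration only, here is that ordering expressed as a small Python path builder. The folder labels are hypothetical, and the type codes are borrowed from the naming convention in section 2. The point is that one shared function, rather than individual judgment, decides where a program lives.

```python
# Hypothetical top-level folder labels keyed by program-type code.
PROGRAM_TYPE_FOLDERS = {
    "EM": "Email",
    "WB": "Webinars",
    "NR": "Nurture",
    "CS": "Content Syndication",
    "EV": "Events",
}


def folder_path(program_type: str, year: int, quarter: int, campaign: str) -> str:
    """Build a path ordered by program type, then year-quarter, then campaign."""
    if program_type not in PROGRAM_TYPE_FOLDERS:
        raise ValueError(f"Unknown program type: {program_type!r}")
    if not 1 <= quarter <= 4:
        raise ValueError(f"Quarter must be 1-4, got {quarter}")
    return "/".join([PROGRAM_TYPE_FOLDERS[program_type], f"{year}-Q{quarter}", campaign])


print(folder_path("WB", 2026, 2, "Security Buyers Guide"))
# Webinars/2026-Q2/Security Buyers Guide
```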

Standard program templates

Every MAP supports recurring program types: email sends, nurture tracks, webinar programs, event follow-ups, content syndication programs. Each has a predictable set of components: assets, lists, logic rules, and triggers (Marketo calls these smart campaigns; HubSpot calls them workflows; Pardot uses Engagement Studio). Build a standard template for each type and use it every time. The benefits compound: faster build times, fewer errors, easier QA, and programs that anyone on the team can support because they follow a familiar pattern.
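
A template can double as a checklist of expected parts. The sketch below assumes you record each template's components in a simple lookup. The component names are illustrative, not any platform's actual object model. The function compares a built program against its template and reports anything missing.

```python
# Illustrative templates: the components each program type is expected to have.
# Names are hypothetical, not a real MAP's object names.
TEMPLATES = {
    "email": {"email_asset", "send_list", "suppression_list", "send_trigger"},
    "webinar": {
        "invite_email",
        "registration_form",
        "landing_page",
        "registration_trigger",
        "follow_up_email",
    },
}


def missing_components(program_type: str, built: set[str]) -> set[str]:
    """Return template components that the built program does not include."""
    return TEMPLATES[program_type] - built


print(sorted(missing_components("email", {"email_asset", "send_list", "send_trigger"})))
# ['suppression_list']
```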

Active vs. archived: a clear policy

Define what active means and how programs get retired when they are done. An instance where old programs are still technically live but nobody is certain what they are doing is an instance where things break in ways that are hard to diagnose. Assign a clear owner to every active program (not a team, a specific person) and do a regular cleanup to archive what is finished.
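
The regular cleanup is easier when candidates are flagged for you. This sketch assumes you can export each program's name and a last-activity date. The 90-day threshold and field names are hypothetical stand-ins for your own policy. The output is a list for a person to review, not something to archive automatically.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Program:
    name: str
    last_activity: date  # e.g. last send, trigger, or edit, as your export defines it


def archive_candidates(programs: list[Program], today: date, max_idle_days: int = 90) -> list[str]:
    """Return names of programs with no recorded activity in the last max_idle_days."""
    cutoff = today - timedelta(days=max_idle_days)
    return [p.name for p in programs if p.last_activity < cutoff]


programs = [
    Program("2025-Q1_EM_ProductLaunch_ENT", date(2025, 2, 10)),
    Program("2026-Q2_WB_SecurityBuyersGuide_ENT", date(2026, 4, 20)),
]
print(archive_candidates(programs, today=date(2026, 5, 1)))
# ['2025-Q1_EM_ProductLaunch_ENT']
```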

2. Naming Conventions and Taxonomy

Naming conventions are not just organizational tidiness — they are the foundation of reporting. If program names do not follow a consistent structure, you cannot reliably pull performance data by campaign type, quarter, channel, or business unit. You end up doing manual cleanup every time you need a report.

A practical naming convention framework

A workable naming convention for most B2B MAP instances includes these elements in a consistent order:

- Date: YYYY-MM or YYYY-Q#, which puts programs in chronological order when sorted by name (choose one format; mixing the two breaks the sort)

- Program type: a short abbreviation such as EM for email, WB for webinar, NR for nurture, CS for content syndication, EV for event

- Campaign name: the specific campaign or theme

- Audience or segment: especially important if your MAP serves multiple products, regions, or business units

Example: 2026-Q2_WB_SecurityBuyersGuide_ENT

The specific format matters less than the consistency. One person who ignores the convention breaks the reporting for everyone.
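
Because the convention is a fixed pattern, it can be checked mechanically before a program is saved. Below is a minimal sketch in Python. It assumes underscore separators, the five type codes listed above, an alphanumeric campaign name, and a required uppercase segment code. Adapt each of these to your own convention. Splitting a name into parts also gives you the fields to group reports by quarter, type, or segment.

```python
import re

# Date_Type_Campaign_Segment, e.g. 2026-Q2_WB_SecurityBuyersGuide_ENT
# Date accepts YYYY-MM (months 01-12) or YYYY-Q# (quarters 1-4).
NAME_PATTERN = re.compile(
    r"(?P<date>\d{4}-(?:0[1-9]|1[0-2]|Q[1-4]))"
    r"_(?P<type>EM|WB|NR|CS|EV)"
    r"_(?P<campaign>[A-Za-z0-9]+)"
    r"_(?P<segment>[A-Z0-9]+)"
)


def parse_program_name(name: str) -> dict:
    """Split a conforming name into its parts, or raise ValueError if it drifts."""
    match = NAME_PATTERN.fullmatch(name)
    if match is None:
        raise ValueError(f"Name does not follow convention: {name!r}")
    return match.groupdict()


print(parse_program_name("2026-Q2_WB_SecurityBuyersGuide_ENT"))
# {'date': '2026-Q2', 'type': 'WB', 'campaign': 'SecurityBuyersGuide', 'segment': 'ENT'}
```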

Apply conventions everywhere, especially UTMs

Naming conventions apply not just to programs but to email assets, landing pages, forms, lists, and UTM parameters. UTMs are where inconsistency most visibly damages downstream reporting. If team members are building UTMs differently, channel attribution data is unreliable. Define a UTM taxonomy, document it, put it in a shared tool the team will actually use, and enforce it without exceptions.
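
A shared UTM tool can be as small as a function that only accepts values from the agreed taxonomy. This sketch uses Python's standard `urllib.parse`. The allowed source and medium values are hypothetical examples. Reusing the program name as `utm_campaign` is one option that keeps attribution aligned with program reporting; it is an assumption, not a requirement.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical taxonomy; replace with your documented values.
ALLOWED_SOURCES = {"newsletter", "linkedin", "partner"}
ALLOWED_MEDIUMS = {"email", "paid-social", "syndication"}


def add_utms(url: str, source: str, medium: str, campaign: str) -> str:
    """Append UTM parameters to url, rejecting values outside the taxonomy."""
    source, medium = source.lower(), medium.lower()  # enforce one casing
    if source not in ALLOWED_SOURCES:
        raise ValueError(f"utm_source {source!r} is not in the taxonomy")
    if medium not in ALLOWED_MEDIUMS:
        raise ValueError(f"utm_medium {medium!r} is not in the taxonomy")
    parts = urlsplit(url)
    # Keep existing non-UTM parameters; drop any UTMs already on the link.
    query = [
        (k, v)
        for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if not k.startswith("utm_")
    ]
    query += [("utm_source", source), ("utm_medium", medium), ("utm_campaign", campaign)]
    return urlunsplit(parts._replace(query=urlencode(query)))


print(add_utms("https://example.com/guide?ref=nav", "Newsletter", "email",
               "2026-Q2_WB_SecurityBuyersGuide_ENT"))
# https://example.com/guide?ref=nav&utm_source=newsletter&utm_medium=email&utm_campaign=2026-Q2_WB_SecurityBuyersGuide_ENT
```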

3. Pre-Launch QA

QA is the step that disappears first under deadline pressure. The email has been reviewed, the links look fine, it will be fine. Except the suppression list was not applied, or the smart campaign trigger has an extra filter that cuts the audience in half, or a token is displaying its variable name instead of its value in the live email.

A pre-launch checklist takes fifteen minutes and catches the things that fatigue and deadline pressure cause humans to miss. The cost of skipping it shows up in unsubscribe complaints, CRM attribution errors, and the occasional very public mistake.

B2B Campaign Pre-Launch Checklist

For every email send:

- Send list is correct: right segment, expected size, no anomalies in the count

- Suppression lists applied: unsubscribes, competitors, current customers if appropriate, active opportunities

- From name and address correct and consistent with brand standards

- Subject line and preview text render correctly, tested across email clients

- All links tested and working, including the unsubscribe link

- UTM parameters present and correctly formatted on every link

- No tokens displaying as variable names in the live version

- Send time and time zone confirmed
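
Several of the email items above can be partly automated against the rendered HTML of a test send. This minimal sketch uses only the Python standard library. It makes three assumptions:

- The token patterns (`{{...}}` and `%%...%%`) cover the merge-token syntaxes in use.
- Spotting the unsubscribe link by the word "unsubscribe" is a reliable enough heuristic.
- Unsubscribe links are exempt from the UTM check.

It supplements the manual review rather than replacing it.

```python
import re
from html.parser import HTMLParser
from urllib.parse import parse_qs, urlsplit

# Assumed merge-token syntaxes that should never survive into a rendered email.
UNRENDERED_TOKEN = re.compile(r"\{\{[^{}]+\}\}|%%[^%]+%%")
REQUIRED_UTMS = ("utm_source", "utm_medium", "utm_campaign")


class LinkCollector(HTMLParser):
    """Collect (href, link text) pairs from <a> tags that have an href."""

    def __init__(self):
        super().__init__()
        self.links: list[tuple[str, str]] = []
        self._href = None
        self._text: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")  # None if the anchor has no href
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text)))
            self._href = None


def email_issues(html: str) -> list[str]:
    """Return problems found in the rendered HTML of a test send."""
    issues = [f"Unrendered token: {t}" for t in UNRENDERED_TOKEN.findall(html)]
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    has_unsubscribe = False
    for href, text in collector.links:
        if "unsubscribe" in (href + " " + text).lower():
            has_unsubscribe = True  # unsubscribe links are not expected to carry UTMs
            continue
        if not href.lower().startswith(("http://", "https://")):
            continue  # skip mailto:, tel:, and similar links
        params = parse_qs(urlsplit(href).query)
        missing = [k for k in REQUIRED_UTMS if k not in params]
        if missing:
            issues.append(f"Link {href} missing {', '.join(missing)}")
    if not has_unsubscribe:
        issues.append("No unsubscribe link found")
    return issues


sample = (
    "<p>Hi {{lead.First Name}},</p>"
    '<a href="https://example.com/guide?utm_source=newsletter&amp;utm_medium=email'
    '&amp;utm_campaign=2026-Q2_WB_SecurityBuyersGuide_ENT">Read the guide</a>'
    '<a href="https://example.com/unsubscribe">Unsubscribe</a>'
)
print(email_issues(sample))
# ['Unrendered token: {{lead.First Name}}']
```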

For nurture programs:

- All wait steps and triggers reviewed for logic accuracy

- Exit and suppression criteria confirmed

- Flow tested end-to-end with a test record

- All assets in approved or active status

For landing pages and forms:

- Form fields map correctly to CRM fields

- Thank-you page or redirect confirmed working

- Hidden fields for UTM capture confirmed working

- Page renders correctly on mobile

- GDPR or CCPA consent language present where required

The checklist only works if using it is non-negotiable. Build it into the launch process as a required step, not a suggestion. Nothing goes live without it completed.
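
If launches pass through any tooling, such as a ticket workflow, a script, or a shared tracker, the "nothing goes live without it" rule can be enforced as a hard gate. The sketch below is hypothetical throughout:

- The checklist keys abbreviate the email list above.
- `approve_launch` is an illustrative name.
- Actually activating the program in the MAP remains a separate step.

```python
# Keys abbreviated from the email checklist above; extend to cover every item.
EMAIL_CHECKLIST = (
    "send_list_verified",
    "suppressions_applied",
    "from_name_and_address",
    "subject_and_preview_text",
    "links_tested",
    "utms_present",
    "no_unrendered_tokens",
    "send_time_and_time_zone",
)


class ChecklistIncomplete(Exception):
    """Raised when a launch is attempted with checklist items still open."""


def approve_launch(program_name: str, signed_off: set[str]) -> str:
    """Approve a launch only if every checklist item has been signed off."""
    outstanding = [item for item in EMAIL_CHECKLIST if item not in signed_off]
    if outstanding:
        # Report every open item at once so the reviewer sees the full gap.
        raise ChecklistIncomplete(
            f"{program_name} blocked; outstanding: {', '.join(outstanding)}"
        )
    return f"{program_name} approved for launch"


try:
    approve_launch("2026-Q2_EM_SecurityBuyersGuide_ENT", {"links_tested", "utms_present"})
except ChecklistIncomplete as err:
    print(err)
```

The gate lists all outstanding items rather than stopping at the first one, so a single review pass can close every gap.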

4. Documentation and Handoff Standards

This is the pillar that determines whether your MAP is an asset or a liability when team composition changes. It is also the one most teams skip, not because they doubt its value but because there is always something more pressing.

What documentation needs to exist

This is not about writing a manual for every campaign. It is about capturing the minimum a new person would need to support or troubleshoot a program without tracking down its original author:

- Program purpose: what it does, who it is for, what triggers entry

- Business rules: non-obvious logic decisions, like why certain contacts are excluded, why a threshold is set where it is, why a wait step is 14 days instead of 7

- Dependencies: the other programs, lists, or fields it relies on, and what breaks if something upstream changes

- Owner and build date: who built it, when, and who is currently responsible

- Change log: what has changed since the original build and why
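
To make the minimum checkable rather than aspirational, the five elements above map naturally onto a structured record. Below is a sketch as a Python dataclass. The field names and example values are hypothetical. Where the record lives, whether a MAP description field or a wiki page, is left open.

```python
from dataclasses import dataclass, field, fields
from datetime import date


@dataclass
class ChangeLogEntry:
    changed_on: date
    change: str
    reason: str


@dataclass
class ProgramDoc:
    program_name: str
    purpose: str             # what it does, who it is for, what triggers entry
    business_rules: str      # non-obvious logic and why it exists
    dependencies: list[str]  # programs, lists, or fields this relies on
    built_by: str
    build_date: date
    current_owner: str       # a specific person, not a team
    change_log: list[ChangeLogEntry] = field(default_factory=list)

    def gaps(self) -> list[str]:
        """Names of text fields that are still empty."""
        return [
            f.name
            for f in fields(self)
            if isinstance(getattr(self, f.name), str) and not getattr(self, f.name).strip()
        ]


# Hypothetical example values.
doc = ProgramDoc(
    program_name="2026-Q2_NR_SecurityBuyersGuide_ENT",
    purpose="Nurtures webinar registrants who did not attend.",
    business_rules="",
    dependencies=["2026-Q2_WB_SecurityBuyersGuide_ENT"],
    built_by="A. Builder",
    build_date=date(2026, 4, 1),
    current_owner="A. Builder",
)
print(doc.gaps())
# ['business_rules']
```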

Where it should live

In the MAP where possible. Most platforms provide a program description or notes field, but teams do not always use it. For more complex programs, a linked internal wiki page or shared doc works well. The goal is that documentation is findable directly from the program itself, not buried in someone’s personal drive.

The handoff protocol

When someone who owns programs leaves or changes roles, there should be a defined process, not an ad hoc knowledge transfer the week before their last day. That means a review of every active program they own, a walkthrough of anything non-obvious, and a formal transfer of ownership in both the MAP and your documentation system.

Beyond individual handoffs, a regular MAP audit (quarterly for fast-moving teams, twice a year at minimum) catches programs with no clear owner, documentation gaps, and naming convention drift before they compound into larger problems.
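
Parts of that audit can be scripted against a program export. This sketch makes two assumptions:

- The export is hypothetical, with name, owner, and description columns.
- The check reuses a naming pattern of the Date_Type_Campaign_Segment shape from section 2.

It reports findings rather than fixing them, leaving each decision to the reviewer.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Same shape as the naming convention: Date_Type_Campaign_Segment.
NAME_PATTERN = re.compile(
    r"\d{4}-(?:0[1-9]|1[0-2]|Q[1-4])_(?:EM|WB|NR|CS|EV)_[A-Za-z0-9]+_[A-Z0-9]+"
)


@dataclass
class ProgramRecord:
    name: str
    owner: Optional[str]  # None or empty if no one is assigned
    description: str


def audit(programs: list[ProgramRecord]) -> dict[str, list[str]]:
    """Group program names by audit finding."""
    findings: dict[str, list[str]] = {"no_owner": [], "no_documentation": [], "naming_drift": []}
    for p in programs:
        if not p.owner:
            findings["no_owner"].append(p.name)
        if not p.description.strip():
            findings["no_documentation"].append(p.name)
        if NAME_PATTERN.fullmatch(p.name) is None:
            findings["naming_drift"].append(p.name)
    return findings


report = audit([
    ProgramRecord("2026-Q2_WB_SecurityBuyersGuide_ENT", "a.lee", "Q2 webinar program."),
    ProgramRecord("Webinar copy (2) FINAL", None, ""),
])
print(report)
```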

Where Does Your Team Stand?

Most MOps teams know their campaign operations could be tighter. This framework helps locate where you are and what to prioritize next.

| Level | Structure & naming | Templates | QA & launch | Documentation |
|---|---|---|---|---|
| 1: Reactive | Built case by case. No consistent naming. | No templates. Each build starts from scratch. | QA informal or skipped. | Context lives in heads. Handoffs are painful. |
| 2: Emerging | Some conventions exist but are not consistently followed. | Informal templates for some program types. | Checklist exists but is not required. | Some programs documented. Knowledge still siloed. |
| 3: Operational | Conventions documented, enforced, and followed. | Standard templates for all major program types. | Required checklist on every launch. | New programs documented before launch. Clear ownership. |
| 4: Scaled | Full taxonomy, including UTMs, enforced across teams and tools. | Templates versioned, maintained, and used without exception. | QA is a gate. Regular MAP audits scheduled. | Formal handoff protocol. Any program supportable by a new team member within their first week. |

Most teams reading this are at Level 1 or 2. Level 3 is achievable for almost any team in a focused quarter. The highest-leverage starting point is naming conventions. You can apply the new standard to new programs going forward and avoid the temptation to retroactively rename everything. Momentum matters more than perfection.

The Standard Is the Work

Campaign operations discipline is rarely what gets celebrated in a pipeline review. But it is what determines whether a marketing ops function scales or spends most of its time firefighting.

Teams that build programs to a standard (structured consistently, named logically, QA’d before launch, and documented well enough for someone else to maintain) spend less time troubleshooting, produce fewer errors, onboard new team members faster, and generate reporting they can actually trust.

Building the standard is a one-time investment. The alternative is paying the compound interest on skipping it, indefinitely.
